I am a Ph.D. student at Angela Dai’s 3D AI Laboratory at TU Munich. I currently focus on in-the-wild indoor scene understanding, including segmentation, retrieval, captioning, and reconstruction. Over the past few years, I have worked with the NVIDIA DVL team, and I am currently with the XRScene team at Meta Zurich.

I received my Bachelor’s from TU Budapest and my Master’s through a double degree program at TU Berlin and ELTE Budapest, majoring in Computer Science of Autonomous Systems. Previously, I worked on robotics and vehicle localization, and then, during my Master’s, on augmented reality, SLAM, and real-time semantic reconstruction.

Research

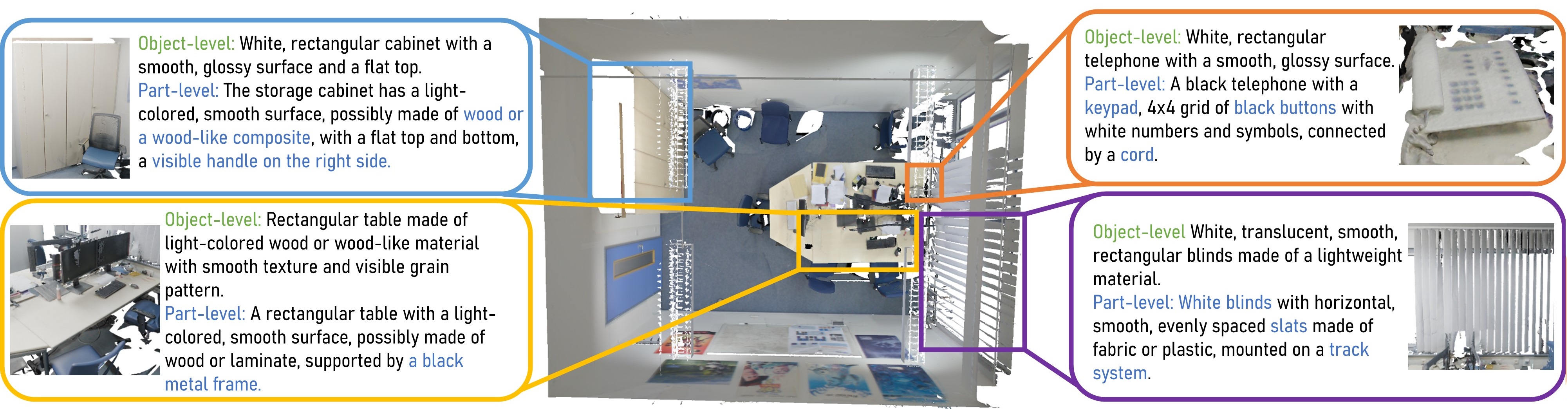

| ExCap3D: Expressive 3D Scene Understanding via Object Captioning with Varying Detail Chandan Yeshwanth, Dávid Rozenberszki, Angela Dai ICCV 2025 project page | paper | code ExCap3D is a 3D captioning model that generates fine-grained part-level descriptions and conditions object-level captions on them, ensuring semantic consistency and similarity in the latent space. To enable this task, the ExCap3D Dataset is constructed using a vision-language model on ScanNet++. ExCap3D outperforms state-of-the-art methods, improving CIDEr scores by 17% at the object level and 124% at the part level. |

| DiffCAD: Weakly-Supervised Probabilistic CAD Model Retrieval and Alignment from an RGB Image Daoyi Gao, Dávid Rozenberszki, Stefan Leutenegger, Angela Dai SIGGRAPH 2024 project page | paper DiffCAD is a weakly-supervised approach for CAD model retrieval and alignment from an RGB image. This approach utilizes diffusion models to tackle the ambiguities in monocular perception, and achieves robust cross-domain performance while being trained only on synthetic data. |

| Dávid Rozenberszki, Or Litany, Angela Dai CVPR 2024 paper | video | project page UnScene3D is the first fully unsupervised 3D learning approach for class-agnostic 3D instance segmentation of indoor scans. We first generate pseudo masks by leveraging self-supervised color and geometry features to find potential object regions. UnScene3D operates on a basis of geometric oversegmentation, enabling efficient representation and learning on high-resolution 3D data. The coarse proposals are then refined through self-training our model on its own predictions. |

| Dávid Rozenberszki, Or Litany, Angela Dai ECCV 2022 paper | video | project page We present the ScanNet200 benchmark, which studies 200-class 3D semantic segmentation – an order of magnitude more class categories than previous 3D scene understanding benchmarks. To address this challenging 3D segmentation task, we propose to guide 3D feature learning by anchoring it to the richly-structured text embedding space of CLIP for the semantic class labels. This results in improved 3D semantic segmentation across the large set of class categories. |

| 3D Semantic Label Transfer in Human-Robot Collaboration Dávid Rozenberszki, Gábor Sörös, Szilvia Szeier, András Lőrincz ICCVW 2021 paper | code We present a system for sharing and reusing 3D semantic information between multiple agents with different viewpoints. We first co-localize all agents in the same coordinate system. Next, we create a 3D dense semantic model of the space from human viewpoints close to real time. Finally, by re-rendering the model's semantic labels from the ground robots' own estimated viewpoints and sharing them over the network, we can give 3D semantic understanding to simpler agents. |

| Demo: Towards Universal User Interfaces for Mobile Robots Dávid Rozenberszki, Gábor Sörös Augmented Humans Conference 2021 paper | video | bibtex We demonstrate the concept and a prototype of automatically generated virtual user interfaces for mobile robots. Human(s) and robot(s) are co-localized in the space via their own on-board navigation and shared spatial anchors. The robot sends the description of context-aware user interface elements to a head-mounted display, which renders the virtual widgets around and seemingly attached to the physical robot. |

| LOL: Lidar-only Odometry and Localization in 3D point cloud maps Dávid Rozenberszki, András Majdik ICRA 2020 paper | video | code We address the problem of odometry and localization for Lidar-equipped vehicles driving in urban environments, where a premade target map exists to localize against. In our problem formulation, to correct the accumulated drift of the Lidar-only odometry, we apply a place recognition method to detect geometrically similar locations between the online 3D point cloud and the a priori offline map. The proposed system integrates a state-of-the-art Lidar-only odometry algorithm with a recently proposed 3D point segment matching method, combining their complementary advantages. |

Teaching

- Seminar for 3D Machine Learning - Winter 2021, Technical University of Munich

- Advanced Deep Learning for Computer Vision: Visual Computing - SS22, WS22, SS23, WS23, Technical University of Munich